Matchmoving is a term loosely used for recreating a virtual camera that reproduces the movements and characteristics of a real camera, so that it can be used in a 3D software package or compositing software. The goal is to combine the originally shot material with computer-generated images seamlessly, so that it all appears to have been shot in one take.

There are several things to consider in order to get satisfactory results:

-camera data

-measurements

-lens distortion

-tracking points

-rolling shutter

-parallax

-be sure to keep good records and measurements on set:

Camera: lens, aperture, film back, zoom, height, approximate movement
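To illustrate why focal length and film back belong in your notes: together they fix the angle of view of the virtual camera. The following is a minimal sketch that assumes an ideal pinhole camera without distortion; the function name and the example values (a 35 mm lens on a 36 mm wide film back) are my own illustration, not values from a particular shoot.

```python
import math


def horizontal_fov_degrees(focal_length_mm: float, film_back_width_mm: float) -> float:
    """Horizontal angle of view of an ideal pinhole camera, in degrees."""
    if focal_length_mm <= 0 or film_back_width_mm <= 0:
        raise ValueError("focal length and film back width must be positive")
    return math.degrees(2.0 * math.atan(film_back_width_mm / (2.0 * focal_length_mm)))


# Hypothetical example values: 35 mm lens, 36 mm wide film back.
fov = horizontal_fov_degrees(focal_length_mm=35.0, film_back_width_mm=36.0)
print(f"horizontal angle of view: {fov:.1f} degrees")
```

If the film back is recorded wrongly, the solver has to compensate with a wrong focal length, which is one reason to write both down on set.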

Set measurements and notes on the relative movement of the camera are also good to have as a guideline. Be sure to take reference photographs so that you still have an impression of the set and its surroundings later on. Measurements of distances and of the size of certain reference objects will help you establish a proper scale for your virtual camera.
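As a rough sketch of how one set measurement establishes scale: a solve usually comes out in arbitrary units, so a single known distance is enough to convert it to real-world units. The function names and numbers below are hypothetical and only show the arithmetic.

```python
def scene_scale(measured_distance_m: float, solved_distance_units: float) -> float:
    """Factor that converts solver units to metres, from one known distance."""
    if solved_distance_units <= 0:
        raise ValueError("solved distance must be positive")
    return measured_distance_m / solved_distance_units


def rescale_point(point, scale):
    """Apply the scale factor to a 3D point given as an (x, y, z) tuple."""
    return tuple(coordinate * scale for coordinate in point)


# Hypothetical example: two markers measured 2.5 m apart on set
# end up 0.5 units apart in the unscaled solve.
scale = scene_scale(measured_distance_m=2.5, solved_distance_units=0.5)
print(scale)
print(rescale_point((1.0, 0.2, -3.0), scale))
```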

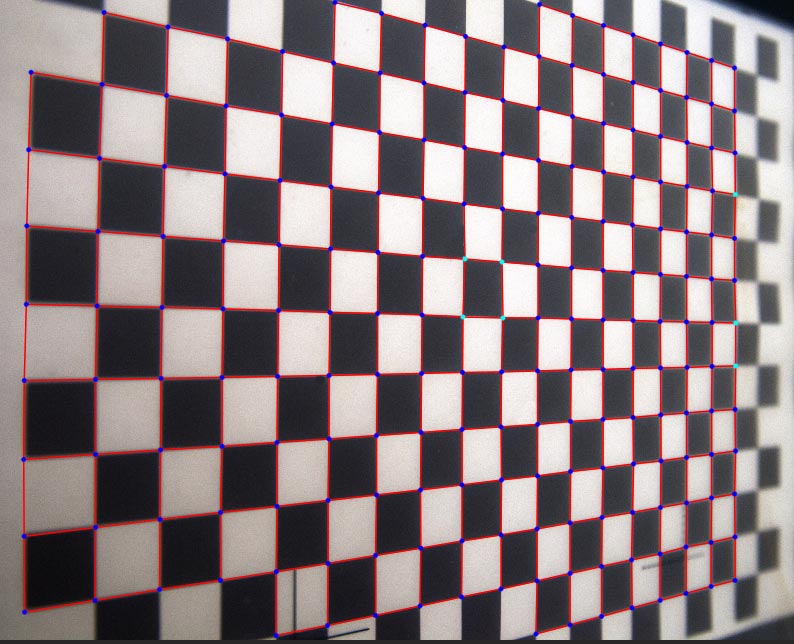

-Lens distortion: every lens has some kind of distortion, although the better and more expensive the lens kit, the less distortion it should have. To be on the safe side, I would recommend always shooting a reference grid (a checkerboard shot from the front or diagonally) to calibrate distortion and lens characteristics such as depth of field. If you create 3D elements, be sure to use those lens characteristics to introduce the same distortion to your CG footage before compositing (or, in theory, you could also undistort your original footage, composite, and then reintroduce the distortion).

Example of a lens distortion grid for recreating a “virtual lens”:

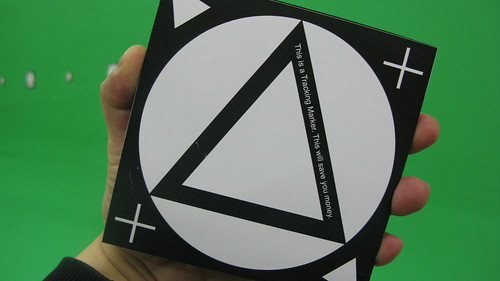
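To make the distort/undistort round trip more concrete, here is a small sketch. It assumes a simple radial polynomial model with two coefficients (`k1`, `k2`) acting on normalized image coordinates; your tracking or compositing package may well use a different model, and the coefficient values are made up for the example.

```python
def distort(x: float, y: float, k1: float, k2: float = 0.0):
    """Apply radial distortion to a normalized, undistorted image point."""
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def undistort(xd: float, yd: float, k1: float, k2: float = 0.0, iterations: int = 20):
    """Invert distort() by fixed-point iteration (fine for mild distortion)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y


# Hypothetical coefficients for a lens with mild barrel distortion.
k1, k2 = -0.1, 0.01
distorted = distort(0.5, 0.3, k1, k2)
restored = undistort(distorted[0], distorted[1], k1, k2)
print(distorted, restored)
```

The same pair of functions covers both workflows mentioned above: distorting the CG to match the plate, or undistorting the plate and redistorting the final composite.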

-tracking: Besides measurements and camera characteristics, good tracking points are the most important basis for a satisfying matchmove. Either you are lucky and there are enough visual patterns in your footage that stay in place and can be tracked properly, or you have to add markers to guarantee a proper track. When adding markers you should take care of several things:

shape: shot from a distance at an outdoor shoot, a tennis ball can be a perfect marker, but in other cases it can be a problem because its round shape gives no hint of perspective. A variety of tracking marker shapes can ensure that you have enough visual data for tracking. Be aware that if you place too many tracking markers, you'll also have to remove them, so place them strategically in areas where it's easy to remove them later. Also, unless you are trying to matchmove an object, tracking points should be stationary.

Examples of tracking markers:

Warning: several kinds of patterns are not suitable for tracking and might ruin or disrupt your solve:

-Highlights and reflections: they travel on the surface and are not suitable for tracking

-images in a mirror

-adjacent edges that only meet in perspective (for example, two buildings that overlap in the image but in reality stand one behind the other)

-parallax: parallax is the effect that, when a camera moves, objects in the foreground appear to move a greater distance across the image than objects in the background. Because of how a camera move is calculated, parallax is an important factor for a good solve. Having a great deal of parallax in your shot will improve your matchmoving solution.
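A quick back-of-the-envelope sketch of parallax: for an ideal pinhole camera that slides sideways, the shift of a point in the image is inversely proportional to its depth. The function name and the numbers (focal length in pixels, camera move, depths) are assumptions chosen only to show the effect.

```python
def image_shift_px(focal_length_px: float, camera_translation_m: float, depth_m: float) -> float:
    """Image shift of a static point when a pinhole camera moves sideways."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_length_px * camera_translation_m / depth_m


# Hypothetical shot: focal length of 1500 px, camera slides 10 cm sideways.
near = image_shift_px(1500.0, 0.1, depth_m=2.0)   # marker 2 m from the camera
far = image_shift_px(1500.0, 0.1, depth_m=50.0)   # building 50 m away
print(near, far)
```

The difference between the two shifts is what gives the solver depth information; if all tracked points sit at roughly the same distance, that difference nearly vanishes.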

Background info on how a matchmove is calculated:

“…camera-matching software utilizes a subset of projective geometry called epipolar geometry. This branch of mathematics is used to describe the geometric relationship between two optical systems viewing the same subject and can be used to locate points in space. Because a moving camera offers a new view every frame, epipolar geometry works for a single moving camera as well, and each new view is understood as a separate optical system.”

The goal of a 3D tracking program is to solve the camera position by using two camera views. “Think triangulation,” writes Katz. Epipolar geometry is a type of triangulation. When using photographs to determine the position of a point on an object using triangulation, it’s necessary to match the image location point in one image to the image location point in a second image. Matching these pair of points in two images is called correspondence. Finding these matches would appear to require searching the entire image. Epipolar geometry proposes that the point we are interested is actually constrained to a single line. This greatly limits the search for that point.” By using the path of the point to define a line, one can predict where a point is going and greatly reduce the amount of computation and hence speed up the tracking process.” source: www.fxguide.com/featured/art_of_tracking_part_2_tips_and_apps_overview/
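The "constrained to a single line" idea from the quote can be sketched in a few lines. This is only an illustration under strong assumptions: two ideal pinhole views with identical orientation, the second camera moved by a known translation `t`, and normalized image coordinates. Real solvers also estimate rotation and work the other way round, recovering the camera from the matches. All names and values are hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(p * q for p, q in zip(a, b))


def project(point):
    """Project a 3D point in camera coordinates to normalized image coordinates."""
    x, y, z = point
    return (x / z, y / z, 1.0)


def epipolar_line(x1, t):
    """Line (a, b, c) in view 2, a*u + b*v + c = 0, on which the match of x1 must lie."""
    return cross(t, x1)


# Hypothetical setup: camera 2 is camera 1 moved by t, with no rotation.
t = (0.5, 0.0, 0.1)
point_cam1 = (1.0, 2.0, 8.0)
point_cam2 = tuple(p - q for p, q in zip(point_cam1, t))

x1 = project(point_cam1)
x2 = project(point_cam2)
line = epipolar_line(x1, t)

print(dot(line, x2))                        # close to zero for the true match
print(dot(line, (x2[0], x2[1] + 0.1, 1.0))) # clearly non-zero for a wrong match
```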

-rolling shutter: when shooting with CMOS-based sensors, it might be necessary to remove the rolling shutter effect using a plugin.

As for shooting with a DSLR, a shutter speed of 1/50 is recommended for most film-like recordings. I found that at that shutter speed, when shooting handheld, some micro jitter can result in smeared images that can be hard to track. Of course you can shorten the shutter speed to get sharper images, but beware that this also affects the look of your video, so it should be more an aesthetic choice than a technical one.

Here are some examples of matchmoving shots I did:
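To get a feeling for the trade-off, here is a rough sketch. It assumes the common 180-degree shutter convention (exposure time of half the frame interval, which gives 1/50 at 25 fps) and a simple linear blur estimate; the image speed of 400 pixels per second is a made-up value for handheld jitter.

```python
def shutter_time_180(fps: float) -> float:
    """Exposure time in seconds for a 180-degree shutter at the given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1.0 / (2.0 * fps)


def motion_blur_px(image_speed_px_per_s: float, exposure_s: float) -> float:
    """Approximate blur streak length for a feature moving at constant image speed."""
    return image_speed_px_per_s * exposure_s


# Hypothetical handheld jitter: features drift at 400 px/s during the exposure.
film_like = motion_blur_px(400.0, shutter_time_180(25.0))  # 1/50 s
sharper = motion_blur_px(400.0, 1.0 / 200.0)               # 1/200 s
print(film_like, sharper)
```

A quarter of the exposure time gives a quarter of the smear, which is easier to track but looks noticeably less film-like.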
